AI Makes History Again? Italian Newspaper Prints First Fully AI-Generated Edition!


You are reading the 81st edition of The Responsible AI Digest by SoRAI (School of Responsible AI) (formerly ABCP). Subscribe today for regular updates!

Are you ready to join the Responsible AI journey, but need a clear roadmap to a rewarding career? Do you want structured, in-depth "AI Literacy" training that builds your skills, boosts your credentials, and reveals any knowledge gaps? Are you aiming to secure your dream role in AI Governance and stand out in the market, or become a trusted AI Governance leader? If you answered yes to any of these questions, let’s connect before it’s too late! Book a chat here.

🚀 AI Breakthroughs

Italian Newspaper Becomes First to Publish AI-Generated Edition

• Italian newspaper Il Foglio has initiated a month-long experiment by publishing a four-page supplement entirely generated by artificial intelligence (AI). This initiative has sparked a range of reactions within the media industry, highlighting both the potential and the challenges of integrating AI into journalism.

• Innovation vs. Authenticity: Many view this initiative as a groundbreaking experiment aimed at revitalizing journalism. Editor Claudio Cerasa emphasized that the project is intended to demonstrate how AI can enhance journalistic practices rather than replace them, stating it is an exploration of AI's role in modern media. The newspaper aims to provoke discussions about the future of storytelling and the evolving relationship between technology and journalism.

• Concerns Over Quality: Critics express apprehension about the quality and depth of AI-generated content. While the articles produced by AI were reported to be grammatically correct and easy to read, some commentators noted that they lacked the unique insights or perspectives typically provided by human journalists. Concerns were raised that reliance on AI could lead to homogenized news coverage, devoid of critical analysis or local relevance.

• The Role of Journalists: The shift in journalists' roles from content creators to facilitators of AI prompts has raised eyebrows. Some argue this change might diminish the profession's integrity and creativity, as journalists become more dependent on AI tools for generating content. Others, however, see it as an opportunity for journalists to focus on higher-level tasks such as investigative reporting and analysis, potentially enhancing the overall quality of journalism.

• Public Reception: The public's reaction remains mixed. While some readers are intrigued by the novelty of an entirely AI-produced edition, others question whether such an approach can truly capture the nuances of news reporting. This experiment has ignited broader conversations about the future of media in an age increasingly dominated by artificial intelligence.

• Overall, Il Foglio's initiative presents a complex interplay between innovation and traditional journalism, highlighting both the potential benefits and challenges posed by integrating AI into news production.

Microsoft Expands AI-Driven Security with New Agents in Security Copilot

• Microsoft announced the expansion of its Security Copilot with AI agents designed to autonomously tackle critical areas like phishing, data security, and identity management;

• Microsoft is adding six new AI agents to Security Copilot, enabling teams to autonomously manage high-volume security tasks while integrating seamlessly with Microsoft Security solutions, enhancing efficiency and protection;

• Five AI agents developed by Microsoft partners will be integrated into Security Copilot, focusing on privacy breach response, network supervision, SOC assessment, alert triage, and task optimization to boost security operations.

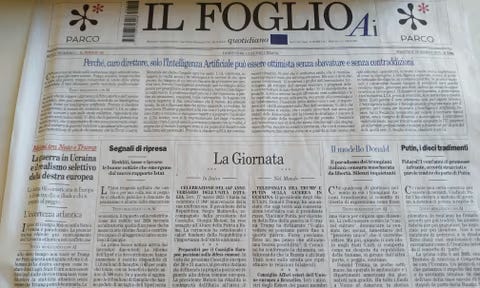

Microsoft's KBLaM Enhances LLM Efficiency and Scalability by Integrating Structured Knowledge

• Large language models face a challenge in efficiently integrating external knowledge, with traditional methods like fine-tuning and RAG introducing trade-offs in cost and complexity;

• The newly introduced KBLaM integrates structured knowledge into pre-trained LLMs through continuous key-value vector pairs, enabling linear scalability and dynamic updates without retraining;

• KBLaM's rectangular attention mechanism reduces computational costs while enhancing model interpretability and reliability, offering a scalable solution for knowledge-augmented AI in critical sectors.
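The linear-scaling claim can be illustrated with a rough count of attention scores. The sketch below is a simplified reading of "rectangular attention", not Microsoft's implementation: it assumes each prompt token attends to every knowledge entry and to the prompt tokens, while knowledge entries attend to nothing, and it ignores causal masking. The function names and the `tokens_per_entry` parameter are hypothetical.

```python
def rectangular_attention_scores(prompt_tokens: int, kb_entries: int) -> int:
    """Score count when only prompt tokens issue queries (assumed simplification).

    Each prompt token attends to all knowledge entries plus the prompt tokens,
    so the score matrix is prompt_tokens x (kb_entries + prompt_tokens).
    """
    return prompt_tokens * (kb_entries + prompt_tokens)


def in_context_attention_scores(prompt_tokens: int, kb_entries: int,
                                tokens_per_entry: int = 1) -> int:
    """Score count if the knowledge were pasted into the prompt as plain text.

    Every token attends to every other token, so the matrix is square.
    """
    total = prompt_tokens + kb_entries * tokens_per_entry
    return total * total


if __name__ == "__main__":
    prompt = 10  # hypothetical prompt length
    for entries in (100, 200, 400):
        rect = rectangular_attention_scores(prompt, entries)
        full = in_context_attention_scores(prompt, entries)
        print(f"{entries} entries: rectangular={rect}, in-context={full}")
```

Under these assumptions, doubling the number of knowledge entries roughly doubles the rectangular count but roughly quadruples the in-context count, which is the trade-off the summary describes.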

Google Rolls Out New AI Features for Gemini, Enhancing Real-Time Screen Analysis

• Google has introduced AI features for Gemini Live, using Project Astra technology, allowing real-time screen and camera analysis for answering user questions, as confirmed by a company spokesperson

• A Reddit user reported Gemini's screen-reading feature on a Xiaomi phone, aligning with Google's earlier announcement of the rollout to Gemini Advanced Subscribers this March

• Google's advancements, highlighted by Gemini's live video capabilities, precede Amazon's Alexa Plus and Apple's delayed Siri upgrade, showcasing Google's AI leadership in tech assistance.

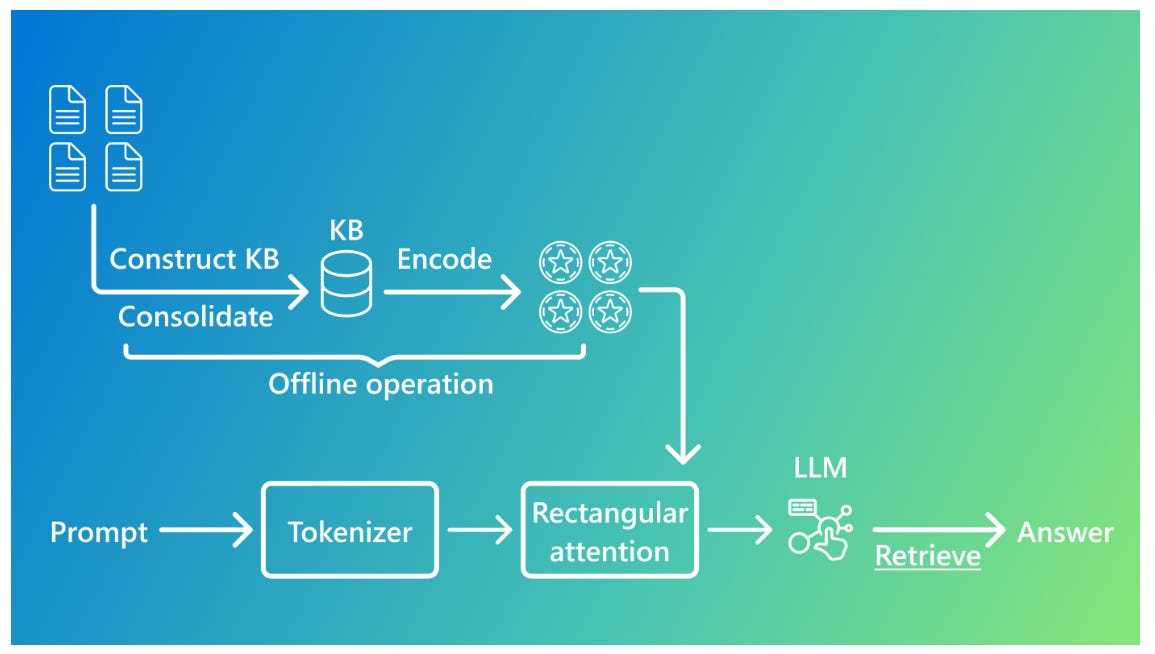

ARC Prize 2025 Returns with New ARC-AGI-2 Benchmark, Focused on Driving AGI Progress

• The ARC Prize 2025 has been launched with a focus on developing efficient AI systems that can beat the newly introduced ARC-AGI-2 benchmark

• ARC-AGI-2 is designed to be challenging for AI while maintaining ease for humans, highlighting capability gaps in current AI systems

• The $1,000,000 prize competition, running on Kaggle, aims to inspire open-source innovations in the AI community, requiring contestants to share their solutions publicly.

Fractal Analytics Plans $13 Million Investment in Large Reasoning AI Model

• Fractal Analytics plans a $13 million investment to develop India's first large reasoning model (LRM) series, seeking $9 million in external funding, according to The Economic Times

• The LRM series will feature three models: small (2-7 billion parameters), medium (20-32 billion parameters), and large (70 billion parameters), optimized for complex content generation and understanding

• Aiming to enhance reasoning capabilities, Fractal plans to use open-source LLMs with permissive licenses, focusing on collaboration, mutual learning, and innovation, as part of its AI development strategy.

OpenAI and Meta in Negotiations with Reliance for Indian AI Expansion Strategies

• OpenAI and Meta Platforms have engaged in discussions with Reliance Industries to explore AI partnerships aimed at enhancing their AI offerings in India, as reported by The Information

• A potential collaboration between Reliance Jio and OpenAI includes distributing ChatGPT, with considerations to reduce its subscription cost from the current $20 monthly fee

• Reliance is exploring the possibility of hosting and running OpenAI and Meta models in a vast three-gigawatt data center in Jamnagar, India, to keep local data secure.

Tencent’s 'Hunyuan-T1': Redefining AI Reasoning with Mamba-Powered Large Model

• Tencent's Hunyuan-T1, powered by a unique Hybrid-Transformer-Mamba MoE architecture, markedly excels in reasoning tasks by leveraging reinforcement learning in the post-training phase;

• The model's TurboS base enhances long-text reasoning capabilities while significantly improving computing efficiency, delivering double the decoding speed compared to its preview version;

• Hunyuan-T1 scores impressively on benchmarks like MMLU-PRO and GPQA-diamond, reflecting its strong performance across various fields, including complex scientific reasoning and mathematics.

Perplexity Aims to Transform TikTok with AI for Enhanced User Experience

• Perplexity envisions revamping TikTok in America with transparent algorithms, aiming to free the platform from foreign manipulation and monopolistic control

• The plan includes enhancing TikTok’s recommendation system using Perplexity's AI infrastructure, promising a 100x scale in performance with Nvidia Dynamo technology

• Transparency and trust are central, with plans to make TikTok's algorithm open-source while enriching content with contextual, well-cited information for users.

HF's Analytics Dashboard Enhancements Provide Real-Time Insights and Customizability for Users

• A recent update highlights enhanced analytics dashboards for monitoring deployment performance, providing real-time metrics for immediate insights into request latency, error rates, and response times

• New analytics features now offer customizable time ranges and auto-refresh options, enabling users to zoom in on specific time frames and track long-term trends effortlessly

• The introduction of a Replica Lifecycle View allows users to monitor each replica’s journey from initialization to termination, facilitating a deeper understanding of endpoint dynamics;

⚖️ AI Ethics


Zoho Founder Predicts AI Will Replace 90% of Repetitive Programming Tasks

• Zoho's founder predicts AI will handle 90% of programming tasks by eliminating repetitive "boilerplate" code, streamlining the development process

• While AI addresses "accidental complexity," human expertise remains crucial for managing "essential complexity" in software engineering, according to industry leaders

• Both the previous Zoho CEO and OpenAI's CEO foresee a potential decline in the demand for software engineers due to AI advancements in code generation;

Isaac Asimov's 1965 AI Prediction Resurfaces, Foreseeing Human-Machine Convergence Future

• A 1965 BBC interview with Isaac Asimov has resurfaced, highlighting his prescient prediction of robots becoming organic while humans advance toward machine-like enhancements

• Asimov's foresight blends science fiction with reality, as today's advancements in AI and biotechnology blur the lines between human and machine

• Renowned for his "Three Laws of Robotics," Asimov's work continues to shape discussions around AI ethics, robotic evolution, and the concept of hybrid entities in modern society.

Yuval Noah Harari Warns AI Could Be More Dangerous Than Nuclear Weapons

• Yuval Noah Harari warns that AI's capacity for autonomous action makes it more menacing than nuclear weapons, posing unprecedented risks to global security and stability;

• Harari emphasizes AI's existing role in independent decision-making in warfare, highlighting the urgent need to regulate its development to prevent unpredictable consequences;

• As AI technology evolves, Harari calls for establishing control mechanisms to align its growth with human values, raising concerns about humanity's diminishing influence over future intelligence.

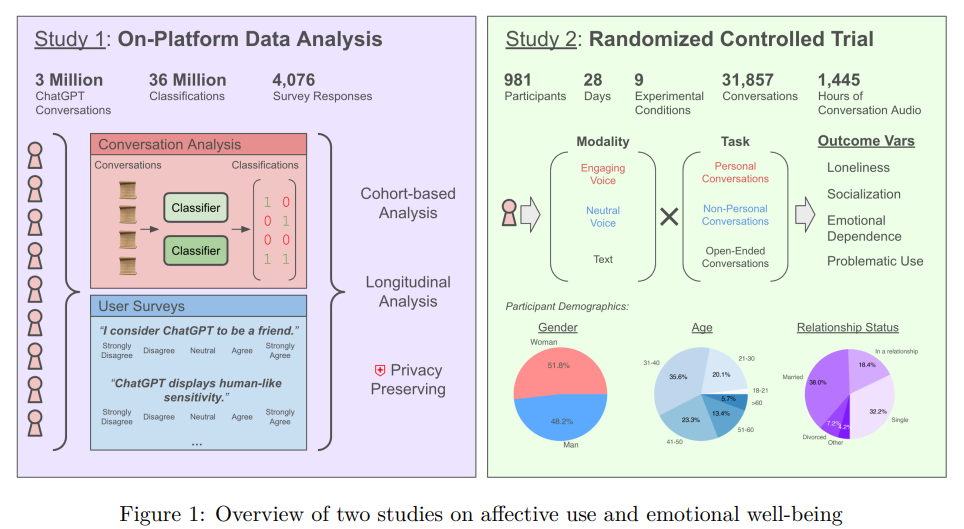

OpenAI and MIT Media Lab Explore Emotional Impact of ChatGPT on Users' Well-being

• Researchers from OpenAI and MIT Media Lab highlight the rare occurrence of emotional engagement with ChatGPT in real-world usage, challenging assumptions about AI's social role

• The study identifies that while affective use of ChatGPT is concentrated among a small subset of heavy users, its impact on emotional well-being varies based on usage patterns

• Findings suggest different types of interactions, such as personal versus non-personal conversations, influence users' feelings of loneliness, emotional dependence, and problematic AI use.

Apple Faces Federal Lawsuit for Misleading Consumers on Delayed AI Features

• Apple faces a federal lawsuit alleging false advertising and unfair competition due to the delayed release of anticipated Apple Intelligence features, including enhancements to Siri.

• The lawsuit seeks class action status in U.S. District Court, claiming Apple misled customers by promoting advanced AI capabilities which were not delivered with recent iPhone models.

• This legal challenge adds to Apple's struggles in generative AI, highlighting the company's efforts to catch up with competitors and manage consumer expectations regarding innovative features.

🎓AI Academia

Study Examines Emotional Impact of ChatGPT on Users Through Extensive Data Analysis

• A recent study examined the emotional impact of Advanced Voice Mode in ChatGPT, analyzing over 4 million conversations and surveying 4,000 users for affective cues and perceptions;

• The randomized controlled trial included nearly 1,000 participants and found highly nuanced effects on emotional well-being influenced by initial emotional state and usage duration;

• Extensive platform usage correlated with increased dependence indicators, with a small group of users responsible for a large share of affective cues, highlighting potential socio-affective alignment challenges.

InfiniteYou Enhances Photo Recrafting with Identity Preservation Using Diffusion Transformers

• InfiniteYou leverages advanced Diffusion Transformers (DiTs) to generate high-fidelity images, preserving identity while enhancing text-image alignment, visual quality, and aesthetic appeal

• InfuseNet, a central component of InfiniteYou, introduces identity features via residual connections, significantly boosting identity similarity without compromising computational efficiency

• Diverse identity-preserved image examples include scenarios like a musician, a bride, and a chef, showcasing the platform's versatility and robust generative capabilities.

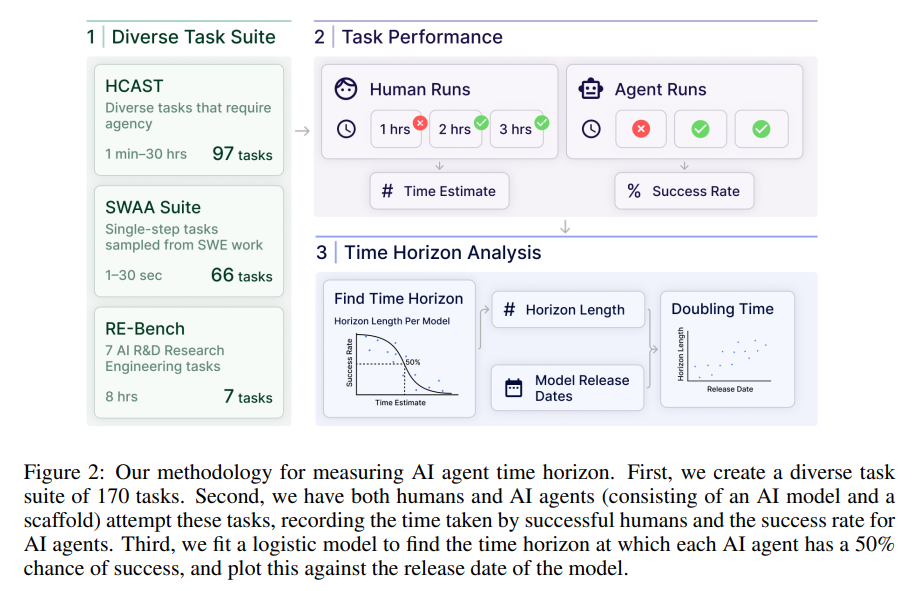

Study Examines AI's Growing Ability to Complete Long Tasks Successfully Over Time

• A new metric, the 50%-task-completion time horizon, is proposed to measure AI abilities: it is the length of tasks, measured by the time they typically take humans, that an AI model can complete with a 50% success rate

• AI models like Claude 3.7 Sonnet are showing a rapid increase in their time horizon, doubling approximately every seven months since 2019, and possibly accelerating in 2024

• Increased AI autonomy, if trends continue, could potentially automate numerous software tasks within five years, tasks that currently require humans about a month to complete;
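The doubling trend above reduces to simple arithmetic. The sketch below assumes steady exponential growth with the seven-month doubling period from the summary; the starting horizon of one hour and the 167-hour working month are illustrative assumptions, not figures taken from this digest.

```python
import math

DOUBLING_MONTHS = 7.0  # doubling period reported in the summary above


def horizon_after(start_hours: float, months: float,
                  doubling_months: float = DOUBLING_MONTHS) -> float:
    """Time horizon after `months`, assuming steady exponential growth."""
    return start_hours * 2 ** (months / doubling_months)


def months_to_reach(start_hours: float, target_hours: float,
                    doubling_months: float = DOUBLING_MONTHS) -> float:
    """Months needed for the horizon to grow from start to target."""
    return doubling_months * math.log2(target_hours / start_hours)


if __name__ == "__main__":
    start = 1.0         # hypothetical current horizon, in hours
    work_month = 167.0  # hypothetical length of one working month, in hours
    months = months_to_reach(start, work_month)
    print(f"{months:.1f} months (~{months / 12:.1f} years)")
```

With these assumed inputs the horizon reaches a working month in roughly 52 months, a little over four years, which is in line with the "within five years" projection. A faster doubling period would shorten that estimate proportionally.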

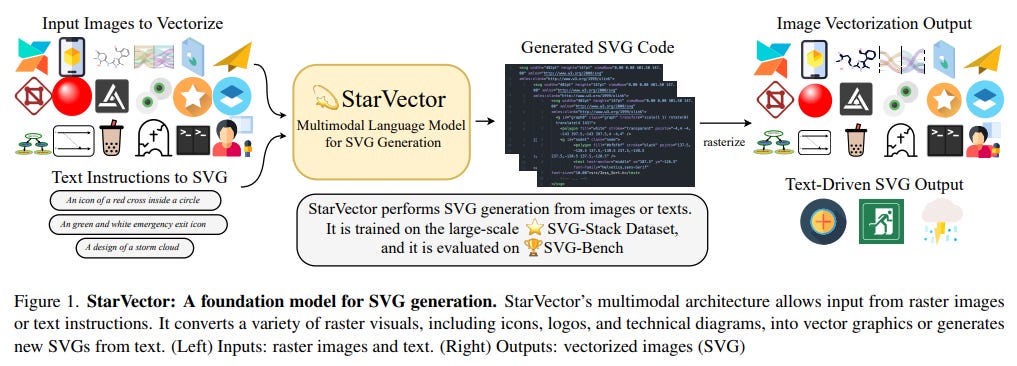

StarVector Converts Images and Text Into Precise SVGs Using AI Model

• StarVector uses a multimodal architecture to convert raster images or text into Scalable Vector Graphics (SVG), offering improved precision and richer semantic understanding compared to traditional methods

• Trained on the extensive SVG-Stack dataset, StarVector excels in generating compact SVG outputs and effectively employs primitives like ellipses and polygons for diverse vectorization tasks

• The effectiveness of StarVector is assessed using SVG-Bench, a comprehensive benchmark that evaluates performance across image-to-SVG, text-to-SVG, and diagram generation tasks, achieving state-of-the-art results;

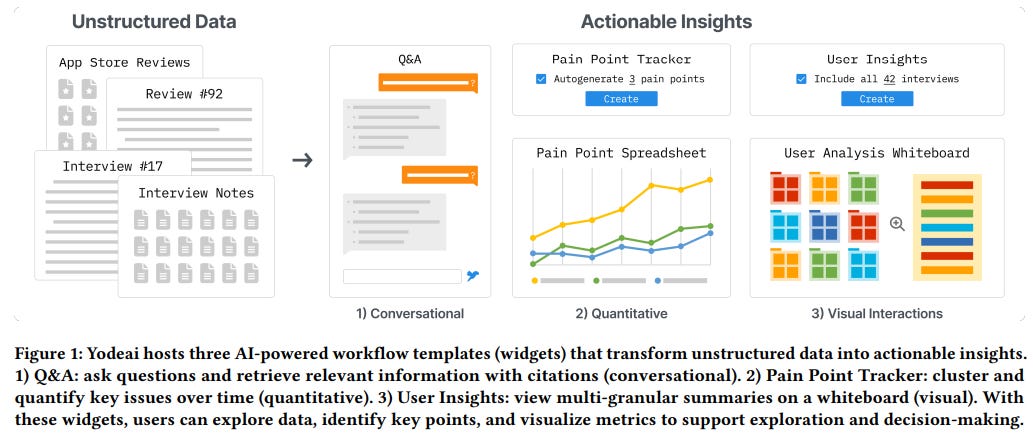

Generative AI in Knowledge Work Reshapes Data Navigation and Decision-Making Tools

• A study highlights the difficulty knowledge workers face in synthesizing unstructured data across platforms and emphasizes the role of AI in streamlining decision-making processes;

• Research underscores that successful AI tools should offer adaptable user control, transparent collaboration, and integration of background knowledge, yet also warns of potential overreliance and user isolation;

• The research outcome proposes design principles focusing on adaptable workflows and context-aware interoperability to enhance generative AI usefulness in professional environments.

European AI Act Faces Challenges in Regulating Manipulative Chatbots with Human Traits

• Large Language Model chatbots increasingly take on human characteristics, potentially enhancing user trust but also posing significant manipulation risks through the illusion of intimate relationships

• Proposed amendments to the AI Act by the European Parliament seek to address manipulative AI systems, yet concerns remain that they do not adequately prevent harms from prolonged AI interactions

• Evidence suggests transparency labels for AI chatbots might paradoxically increase trust, potentially exacerbating subtle, long-term user harm, especially in mental health contexts.

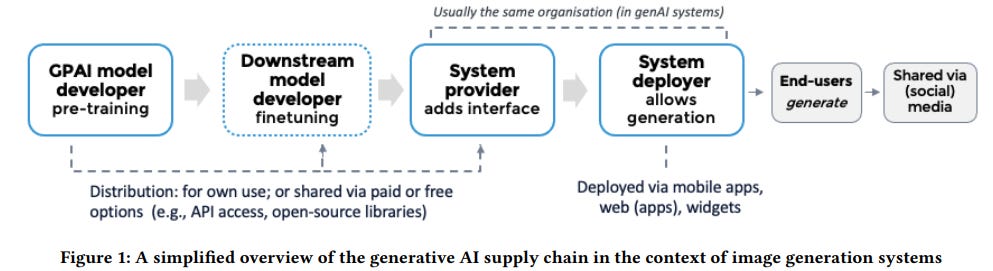

AI Watermarking: Understanding New EU AI Act Requirements and Practice Implications

• The study offers an empirical analysis of 50 popular AI systems for image generation, examining the degree of watermarking implementation under the new EU AI Act.

• The EU AI Act mandates machine-readable markings and visible disclosures on AI-generated content, posing potential fines for non-compliance starting August 2026.

• Legal ambiguities in the EU AI Act regarding watermarking responsibilities and the definition of "deep fakes" highlight challenges in aligning legal and business incentives.

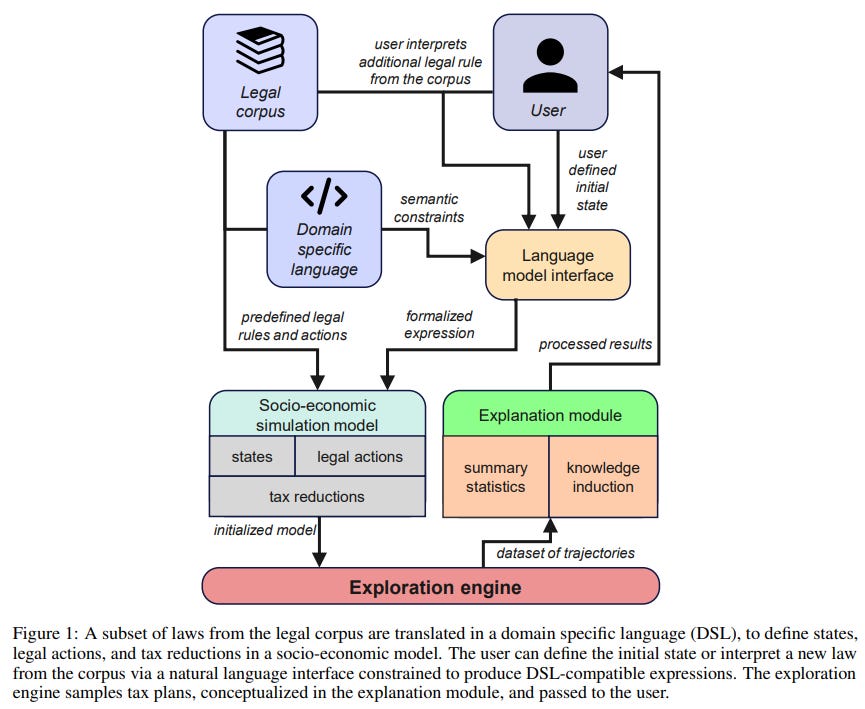

AI Systems Aim to Uncover Tax Loopholes and Improve Legal Policy Frameworks

• A new prototype system, integrating natural language processing with domain-specific planning languages, aims to uncover tax loopholes and enhance legal policy formulation by leveraging artificial intelligence

• The study addresses the tax gap by exposing policy design flaws and compliance issues, which are major factors behind global wealth inequality and uncollected taxes

• The system's potential benefits include expanding tax planning accessibility, enabling broader societal evaluation of tax laws, and contributing to improved social welfare through informed policy design.

Survey Investigates Efficient Reasoning Approaches for Optimizing Large Language Models

• A new survey provides a structured overview of efficient reasoning in Large Language Models (LLMs), aiming to balance high performance with reduced computational costs;

• Innovative approaches in reasoning optimization include model-based, reasoning output-based, and input prompt-based techniques, each tackling the issue of reasoning length differently;

• The repository maintained online continuously updates research findings in efficient reasoning, highlighting its ongoing evolution and importance in real-world applications.

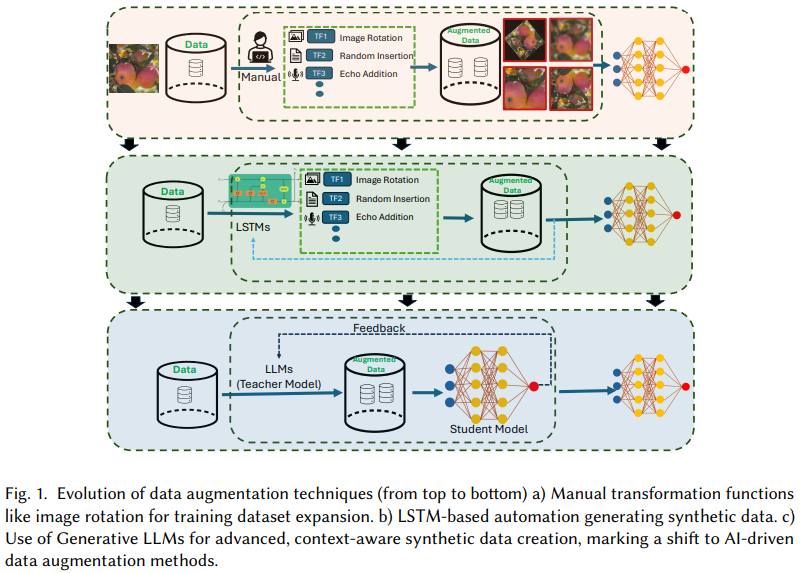

Survey Highlights Multimodal LLM Innovations in Image, Text, and Speech Augmentation

• A survey highlights the shift to multimodal large language models for data augmentation, filling gaps in research by focusing on image, text, and audio enhancements

• Existing literature's limitations are addressed, presenting potential solutions and comprehensive insights into using LLMs to augment diverse datasets

• Future directions are suggested to expand usage of multimodal LLMs, improving deep learning model robustness through enhanced dataset quality and diversity.

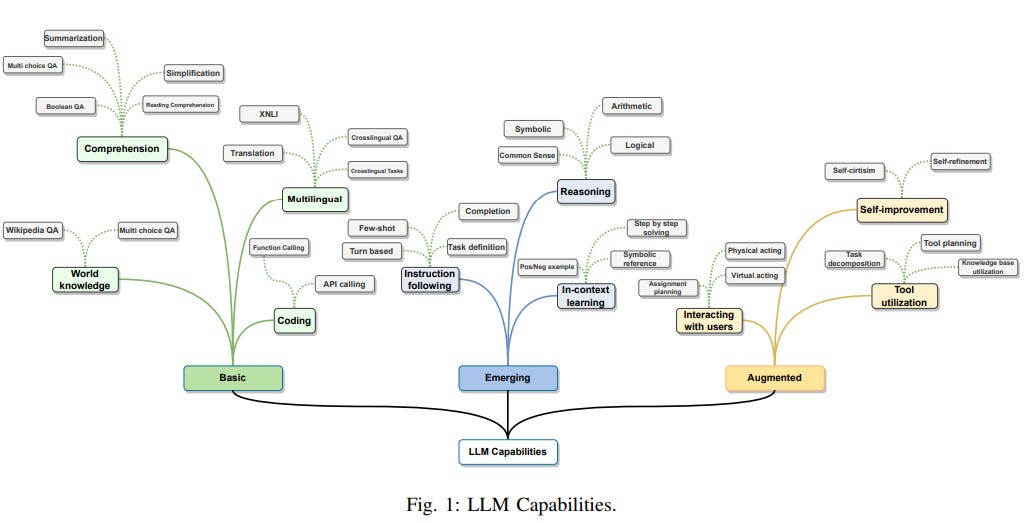

Comprehensive Survey Highlights Progress, Challenges, and Future Directions of Large Language Models

• Large Language Models (LLMs) are revolutionizing natural language tasks by leveraging massive datasets, boosting AI's capabilities beyond traditional statistical models.

• The survey highlights recent advances in transformer-based LLMs like GPT, LLaMA, and PaLM, discussing their strengths, limitations, and future directions.

• Data sparseness challenges in language models were tackled by neural nets using embedding vectors, paving the way for enhanced natural language understanding.

Study Investigates Open Language Models' Ability to Mimic Human Personalities Using Psychological Tests

• Researchers explore the nuanced relationship between Open Large Language Models (LLMs) and human psychology, examining how these models can mimic human personalities through personality assessments like MBTI and BFI;

• Findings indicate that, while Open LLMs display distinct personality traits, they often struggle to adapt to imposed traits, with a few exceptions showing successful personality mirroring when conditioned;

• The study highlights the potential of combining role and personality conditioning to improve Open LLMs' ability to emulate human personalities, emphasizing significant implications for human-computer interactions.

New AI Framework AEJIM Enhances Speed and Accuracy in Hazard Reporting

• AEJIM, an AI framework for real-time environmental hazard detection, combines crowdsourced validation and AI-driven reporting to enhance accuracy and speed compared to traditional methods;

• The framework tackles ethical and regulatory challenges by incorporating Explainable AI, GDPR-compliant data practices, and active public participation to ensure transparency and accountability;

• Validated through a pilot in Mallorca, AEJIM demonstrates its adaptability and capability to support informed global decision-making in under-monitored regions critical to sustainability goals.

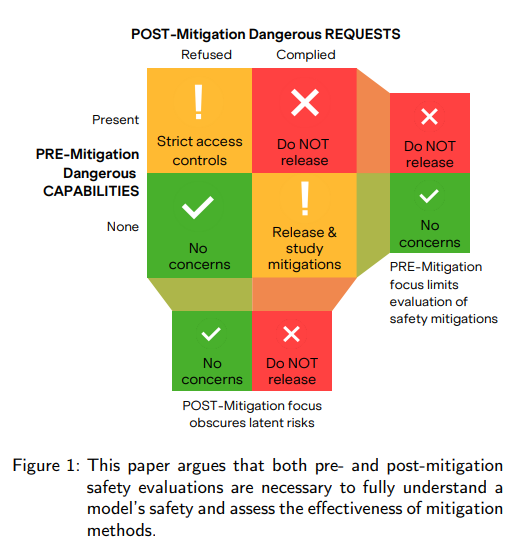

AI Companies Urged to Disclose Pre- and Post-Mitigation Safety Evaluations for Better Oversight

• A recent paper emphasizes the necessity for AI companies to report both pre- and post-mitigation safety evaluations, crucial for assessing risks and the effectiveness of safety measures;

• Current evaluations from AI companies fall short due to inconsistent methodologies and vague reporting, often ignoring comprehensive pre- and post-mitigation assessments;

• The paper advocates for mandatory disclosure of AI safety evaluations, proposing standardized guidelines to better inform policymakers on model risks and mitigation success.

Ethical Concerns in AI Data Collection: Innovation Versus Privacy in New Study

• The "Ancient Land" International Online Scientific Journal discusses the ethical implications of AI-driven data collection, stressing privacy concerns and innovative developments from 2023 to 2024;

• The article compares regulatory approaches in the EU, US, and China, highlighting the challenges of establishing a globally harmonized AI governance framework;

• Case studies in healthcare, finance, and smart cities illustrate practical challenges, emphasizing the need for adaptive governance and international cooperation to balance innovation with protecting individual rights.

New Framework Proposes Stakeholder-Driven Approach for Ethical AI and Privacy Alignment

• Researchers at Vancouver Island University propose a Privacy-Ethics Alignment in AI (PEA-AI) framework to navigate privacy challenges in AI ecosystems, focusing on young digital users

• Insights from 482 participants reveal distinct privacy expectations among youths, parents, educators, and AI professionals, highlighting gaps in AI literacy and transparency

• The PEA-AI model structures privacy as a dynamic negotiation between stakeholders, advocating for scalable, adaptive governance to address diverse privacy needs in AI environments;

About SoRAI: The School of Responsible AI (SoRAI) is a pioneering edtech platform by Saahil Gupta, AIGP, focused on advancing Responsible AI (RAI) literacy through affordable, practical training. Its flagship AIGP certification courses, built on real-world experience, drive AI governance education with innovative, human-centric approaches, laying the foundation for quantifying AI governance literacy. Subscribe to our free newsletter to stay ahead of the AI Governance curve.